Introduction

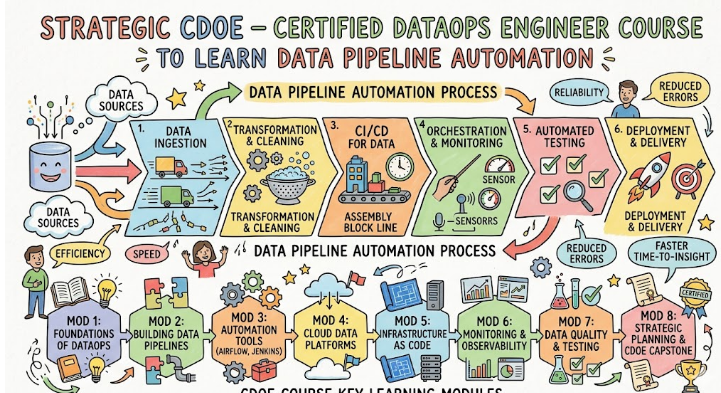

CDOE – Certified DataOps Engineer focuses on transforming traditional data engineering into a fully automated and reliable process using modern DevOps principles. It is designed for professionals who want to work in advanced data environments where pipelines must be fast, scalable, and error-free. The certification covers how to manage data workflows across different stages including ingestion, processing, validation, and delivery. It is especially valuable in industries where real-time analytics and data accuracy are critical. This guide helps professionals understand how DataOps is shaping the future of engineering and why it is becoming an essential skill.

What is the CDOE – Certified DataOps Engineer?

The CDOE – Certified DataOps Engineer is a professional framework that treats data quality as a continuous engineering discipline rather than a one-time cleanup task. It represents a move toward “Quality-as-Code,” where every data point is validated against technical and business rules in real-time. This certification exists to standardize the way organizations detect, report, and fix data anomalies before they reach the end user. It ensures that engineers have the technical skills to build observability into every layer of the data stack, from ingestion to consumption.

Who Should Pursue CDOE – Certified DataOps Engineer?

This path is essential for data quality engineers, analytics architects, and software developers who are tired of fixing the same data errors repeatedly. It is also highly relevant for compliance officers and technical leads in the Indian financial and healthcare sectors who must guarantee data accuracy for regulatory reasons. Managers overseeing large-scale data migrations or AI projects find this certification vital for reducing project risk. Whether you are an individual contributor or a leader, these skills are the key to moving from reactive firefighting to proactive quality management.

Why CDOE – Certified DataOps Engineer is Valuable Today and Beyond

The value of this certification lies in its focus on “Defensive Data Engineering.” In a future dominated by automated AI decision-making, “bad data” can cause massive financial and reputational loss. CDOE provides the technical armor needed to protect an organization from these risks by teaching engineers how to build “circuit breakers” and automated lineage. Holding this credential signals that you are an expert in data integrity, making you a critical hire for any organization that treats its data as a strategic asset. It helps keep your career stable as companies prioritize “Trusted AI” over “Fast AI.”

CDOE – Certified DataOps Engineer Certification Overview

The program is delivered by DataOps School and is hosted on its official website. The curriculum is centered on “Automated Validation,” teaching students how to build unit tests for data that run automatically within the CI/CD pipeline. The assessment requires candidates to design a system that can detect “silent failures,” situations where a pipeline runs successfully but the data inside it is incorrect. By passing this certification, a professional proves they can manage the complex technical requirements of maintaining high-integrity data at scale.
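To make the idea of a “unit test for data” concrete, here is a minimal Python sketch. It is not taken from the CDOE curriculum: the `orders` batch, the field names, and the three rules are hypothetical, chosen only to show how a check can fail even when the pipeline code itself runs without errors.

```python
from dataclasses import dataclass


@dataclass
class Violation:
    row_index: int
    rule: str


# Assumed set of valid currencies for the illustrative "orders" batch.
KNOWN_CURRENCIES = {"USD", "EUR", "INR"}


def check_orders(rows: list[dict]) -> list[Violation]:
    """Return every rule violation found in a batch of order records."""
    violations = []
    for i, row in enumerate(rows):
        amount = row.get("amount")
        if amount is None:
            violations.append(Violation(i, "amount_present"))
        elif amount < 0:
            violations.append(Violation(i, "amount_non_negative"))
        if row.get("currency") not in KNOWN_CURRENCIES:
            violations.append(Violation(i, "currency_known"))
    return violations


def test_orders_batch_is_clean():
    """A pytest-style data test that a CI job could run on a sample batch."""
    batch = [
        {"amount": 120.0, "currency": "INR"},
        {"amount": 15.5, "currency": "USD"},
    ]
    assert check_orders(batch) == []
```

A batch containing a negative amount would load without any exception, yet `check_orders` would report it. That gap between “the job succeeded” and “the data is right” is the silent failure described above.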

CDOE – Certified DataOps Engineer Certification Tracks & Levels

The curriculum is structured into Foundation, Professional, and Advanced levels, focusing on the progressive mastery of data integrity. The Foundation level covers the philosophy of “Truth in Data” and basic validation concepts. The Professional level focuses on the technical implementation of automated testing frameworks and observability dashboards. The Advanced level is dedicated to enterprise-wide data governance, federated quality standards, and complex lineage tracing. This tiered approach allows engineers to build deep expertise in trust-based engineering incrementally.

Complete CDOE – Certified DataOps Engineer Certification Table

| Track | Level | Who it’s for | Prerequisites | Skills Covered | Recommended Order |
| --- | --- | --- | --- | --- | --- |
| Trust Core | Foundation | All Tech Roles | General IT Literacy | Data Quality Basics, Lean | 1 |
| Quality Engineering | Professional | Data Devs, SREs | Foundation Cert | Automated Testing, Observability | 2 |
| Integrity Arch | Advanced | Tech Leads, Archs | Professional Cert | Lineage, Federated Governance | 3 |
| Audit/Sec | Specialist | Security, Compliance | Foundation Cert | Immutable Logs, Data Masking | Optional |

Detailed Guide for Each CDOE – Certified DataOps Engineer Certification

What it is

The Foundation-level certification validates an engineer’s understanding of why data trust is the most important metric in a pipeline. It proves they know how to identify data quality issues before they become business problems.

Who should take it

It is ideal for anyone working with data who wants to understand the foundational principles of automated quality control.

Skills you’ll gain

- Mastery of the “Data Quality Dimensions” (Accuracy, Completeness, etc.).

- Ability to identify “Silent Failures” in traditional data processing.

- Understanding how to implement feedback loops between data producers and consumers.

- Knowledge of basic data profiling techniques to find anomalies.

Real-world projects you should be able to do after it

- Designing a basic data quality report for a small production table.

- Implementing an automated alert for “null” values in a critical data stream.

- Mapping out the “lineage” of a single data point from source to report.
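The null-value alert from the project list above could start from something like the following sketch. The function names, the 1% default limit, and the alert text are illustrative assumptions; a real stream would feed records in windows and route the message to whatever alerting tool the team uses.

```python
def null_rate(records, field):
    """Fraction of records where `field` is missing or None."""
    if not records:
        return 0.0
    missing = sum(1 for record in records if record.get(field) is None)
    return missing / len(records)


def null_alert(records, field, max_null_rate=0.01):
    """Return an alert message if the null rate exceeds the limit, else None."""
    rate = null_rate(records, field)
    if rate > max_null_rate:
        return f"ALERT: {field} null rate {rate:.1%} exceeds {max_null_rate:.1%}"
    return None


# One window of a hypothetical stream: one of four records lacks an id.
window = [{"id": 1}, {"id": None}, {"id": 3}, {"id": 4}]
print(null_alert(window, "id"))  # ALERT: id null rate 25.0% exceeds 1.0%
```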

Preparation plan

- 7–14 Days: Focus on the DataOps Manifesto and the core dimensions of data quality.

- 30 Days: Practice basic data profiling using open-source tools or SQL scripts.

- 60 Days: Review official case studies on data failures and take the foundational exam.

Common mistakes

- Assuming that data is “correct” just because the transformation code didn’t crash.

- Neglecting the business context of what “good data” actually looks like.

Best next certification after this

- CDOE – Professional level.

Choose Your Learning Path

DevOps Path

Engineers on this path focus on the technical “gates” in the delivery pipeline. They build automated tests that prevent “bad data” from ever reaching the production warehouse. The goal is a production environment that is “clean by design.”

DevSecOps Path

This path prioritizes the “Integrity and Privacy” of data. These professionals build automated audits that ensure data has not been tampered with and that sensitive info is always masked. They ensure that data trust includes both accuracy and security.

SRE Path

The SRE path focuses on the “Observability” of data health. These engineers build real-time dashboards that track data quality metrics alongside server performance. They ensure that data reliability is a visible and manageable part of the platform.
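As a rough illustration of the kind of data-health metrics such a dashboard might track, the sketch below computes freshness and volume for a single table load. The function name, the returned keys, and the 50% to 150% volume tolerance are assumptions made for this example only.

```python
from datetime import datetime, timedelta, timezone


def health_snapshot(row_count, last_loaded_at, now, expected_rows, max_age):
    """Summarise volume and freshness for one table load."""
    age = now - last_loaded_at
    volume_ratio = row_count / expected_rows if expected_rows else 0.0
    return {
        "age_minutes": age.total_seconds() / 60,
        "is_fresh": age <= max_age,
        "volume_ratio": volume_ratio,
        # Assumed tolerance band: flag loads far smaller or larger than usual.
        "volume_ok": 0.5 <= volume_ratio <= 1.5,
    }


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
snapshot = health_snapshot(
    row_count=800,
    last_loaded_at=now - timedelta(minutes=90),
    now=now,
    expected_rows=1000,
    max_age=timedelta(hours=1),
)
print(snapshot["is_fresh"], snapshot["volume_ok"])  # False True
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the check easy to test and replay.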

AIOps / MLOps Path

This path addresses the “Training Data Trust” challenge. Engineers learn to monitor for “data drift” that could cause AI models to make incorrect predictions. It ensures that the “brain” of the company is fed with high-integrity information.
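One simple way to picture data drift monitoring is to measure how far the mean of a feature has moved from its historical baseline. The sketch below does this in units of the baseline standard deviation. It is a deliberately basic heuristic, not the method the program prescribes, and the threshold of 3.0 is an assumed default.

```python
from statistics import mean, stdev


def mean_shift(baseline, current):
    """Shift of the current mean, in baseline standard deviations.

    `baseline` needs at least two values so a sample deviation exists.
    """
    spread = stdev(baseline)
    gap = abs(mean(current) - mean(baseline))
    if spread == 0:
        return 0.0 if gap == 0 else float("inf")
    return gap / spread


def has_drifted(baseline, current, threshold=3.0):
    """True when the mean has moved more than `threshold` deviations."""
    return mean_shift(baseline, current) > threshold


baseline = [10, 12, 11, 9, 13]          # historical feature values
print(has_drifted(baseline, [11, 12, 10]))  # False
print(has_drifted(baseline, [20, 21, 19]))  # True
```

Production systems usually compare whole distributions rather than a single statistic, but the structure is the same: a baseline, a current sample, and a threshold that triggers review.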

DataOps Path

The primary path is for those who want to be the ultimate “Trust Architects.” They manage the end-to-end orchestration of quality checks across the entire organization. They are the leaders of a culture where data integrity is the top priority.

FinOps Path

This path ensures that the cost of data quality is optimized. Engineers learn to balance the cost of extensive testing with the business risk of bad data. They bridge the gap between technical quality and financial sustainability.

Role → Recommended Certifications

| Role | Recommended Certifications |
| --- | --- |
| DevOps Engineer | CDOE Foundation, CDOE Professional |
| SRE | CDOE Foundation, Certified Site Reliability Engineer – Foundation |
| Platform Engineer | CDOE Professional, CDOE Advanced |
| Cloud Engineer | CDOE Foundation, Professional |
| Security Engineer | CDOE Foundation, DevSecOps Track |
| Data Engineer | CDOE Foundation, Professional, Advanced |
| FinOps Practitioner | CDOE Foundation, FinOps Track |
| Engineering Manager | CDOE Foundation, Leadership Track |

Next Certifications to Take After CDOE – Certified DataOps Engineer

Same Track Progression

Upon mastering the engineering of data trust, the Advanced CDOE level is the next milestone. This involves mastering “Federated Quality,” where different teams manage their own quality while following central standards. It prepares you for roles like Head of Data Governance.

Cross-Track Expansion

Many trust experts choose to move into specialized data security or advanced cloud engineering tracks. Combining CDOE with a Professional Data Engineer or Security Architect certification creates a profile that can handle both the accuracy and the protection of global data assets.

Leadership & Management Track

As you move into management, the focus shifts to building a “Data-Driven Culture.” You will learn how to design the policies that reward high data quality and transparency. This track prepares you for “Chief Data Officer” or “VP of Analytics” positions.

Training & Certification Support Providers for CDOE

DevOpsSchool

DevOpsSchool provides a robust curriculum focused on the automated validation of data. They offer hands-on labs that simulate “dirty data” scenarios, helping engineers learn how to build resilient “circuit breakers.” Their instructors emphasize the practical technical skills needed to ensure data trust in real-world pipelines.

Cotocus

Cotocus offers advanced technical training that focuses on the architectural design of trusted data platforms. Their sessions are designed to help senior leads master the art of automated lineage and federated quality. They provide the deep technical knowledge required for the Advanced level exam.

Scmgalaxy

Scmgalaxy is a premier resource for community-driven learning and technical documentation. They provide a wide variety of tutorials on how to use version control and CI/CD to manage data quality standards. Their focus on the “how-to” of automation is highly beneficial for the trust track.

BestDevOps

BestDevOps focuses on providing clean, curated training for the modern “Ops” professional. Their modules are designed to help engineers understand the unique challenges of data observability quickly. They offer an excellent balance between quality theory and data engineering practice.

Devsecopsschool

Devsecopsschool is the leading provider for security-integrated engineering. They teach how to build “automated trust” into the data lifecycle, ensuring that data is both accurate and safe. Their curriculum is essential for any professional on the DevSecOps track.

Sreschool

Sreschool focuses on the reliability and performance of platforms, which is essential for consistent data quality. They help engineers apply SRE discipline to ensure that data observability is a core part of the infrastructure. Their training is the foundation for anyone managing high-integrity data systems.

Aiopsschool

Aiopsschool provides training for the future of AI-driven operations. They teach how to manage the data quality challenges unique to machine learning models. This curriculum is vital for engineers working in AI-first companies where data trust is a matter of business survival.

Dataopsschool

Dataopsschool is the official authority and primary provider for the CDOE – Certified DataOps Engineer program. They offer the most direct path to certification with official guides that focus on the “Trusted Pipeline.” Learning from the source ensures you master the core integrity concepts correctly.

Finopsschool

Finopsschool teaches the skills needed to manage the financial health of the trusted data platform. They show engineers how to optimize the cost of data quality checks while maintaining high standards. This training is essential for anyone responsible for data-intensive business budgets.

Frequently Asked Questions (General)

- How does DataOps ensure “Data Trust”? By automating quality checks at every stage of the pipeline, ensuring that errors are caught before they reach a human or an AI.

- Is this only about fixing broken code? No, it is about fixing “broken data”: situations where the code works perfectly but the values inside the data are wrong.

- What is a “Data Circuit Breaker”? It is an automated script that stops a pipeline if the data quality falls below a certain threshold, preventing the spread of bad info.

- Does the exam test my SQL skills? The Professional level tests your ability to use SQL and other tools to write complex automated quality tests.

- How long does it take to become an expert in Data Trust? Most professionals with a technical background can achieve the Professional level in 3 to 5 months.

- What is “Data Observability”? It is the ability to see the health of the data (quality, freshness, volume) in real-time through dashboards and alerts.

- Is this certification valuable in India? Yes, Indian tech hubs have a massive demand for professionals who can guarantee data quality for global clients.

- How does this relate to “Data Lineage”? Lineage is the technical map that shows where data came from, which is essential for verifying its trust and origin.

- Can I use these skills for small databases? Yes, the principles of data trust apply whether you are managing a small SQL database or a massive data lake.

- Does the certification involve manual auditing? The program focuses on automated auditing, where the system checks itself for errors constantly.

- How do I keep my certified trust status active? Renewal typically happens every two years through continuing education or by progressing to the Advanced level.

- Is there a focus on Data Governance? Yes, but it is “Automated Governance” that happens inside the code, rather than a slow manual review board.

FAQs on CDOE – Certified DataOps Engineer

- How does the curriculum handle “Data Drift” detection? It teaches technical patterns for comparing current data distributions with historical norms to find anomalies.

- What role does “Immutability” play in data trust? The curriculum covers how to use immutable logs to ensure that data has not been changed without a record.

- Will I learn about “Truth Segregation”? Yes, the program teaches how to separate raw data from “trusted” data zones to ensure consumers only see high-quality info.

- How are “Quality SLOs” different from Uptime SLOs? Quality SLOs focus on metrics like “Accuracy Rate” and “Null Percentages” rather than just the server being “Up.”

- Does the training cover real-time quality checks? Yes, the Professional level includes patterns for validating data as it flows through streaming platforms like Kafka.

- How does CDOE address “Broken Lineage”? It teaches how to build automated metadata collectors that ensure the map of the data is always up to date.

- Is there a focus on “Root Cause Analysis” for Data? Yes, the program teaches how to use lineage and observability to quickly find the source of a data error.

- Is this more about “Testing” or “Architecture”? It is a balanced approach that teaches you how to design a “Trusted Architecture” and then test it with automation.
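To show how lineage supports root cause analysis, the sketch below walks a small lineage map upstream from a broken report. The dataset names and the dictionary layout are hypothetical; real lineage is usually collected automatically as metadata rather than written by hand.

```python
from collections import deque

# Hypothetical lineage map: each dataset lists the datasets it is built from.
LINEAGE = {
    "revenue_report": ["orders_clean", "fx_rates"],
    "orders_clean": ["orders_raw"],
    "fx_rates": [],
    "orders_raw": [],
}


def upstream(dataset, lineage):
    """Return all ancestors of `dataset`, nearest first (breadth-first)."""
    seen = set()
    order = []
    queue = deque(lineage.get(dataset, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # shared ancestors are reported only once
        seen.add(node)
        order.append(node)
        queue.extend(lineage.get(node, []))
    return order


print(upstream("revenue_report", LINEAGE))
# ['orders_clean', 'fx_rates', 'orders_raw']
```

When a number in `revenue_report` looks wrong, this ordering gives an engineer a checklist: inspect the nearest inputs first, then move further back toward the raw source.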

Conclusion

From my perspective as an expert in high-stakes engineering, trust is the only currency that matters in data. A fast pipeline that delivers wrong data is worse than no pipeline at all. The CDOE – Certified DataOps Engineer is specifically designed to make you the guardian of that trust. It is arguably the most valuable certification for anyone who wants to work in fields like Fintech, Healthcare, or AI. It transforms you from a “data mover” into a “data guarantor.” This shift in role is exactly what leads to senior leadership positions. By mastering the art of automated data trust, you are not just passing an exam; you are ensuring the future of your organization’s digital strategy. Start with the foundation, master the observability labs, and build a career that everyone in the business can trust.